AI’s power in public safety hiring and screening

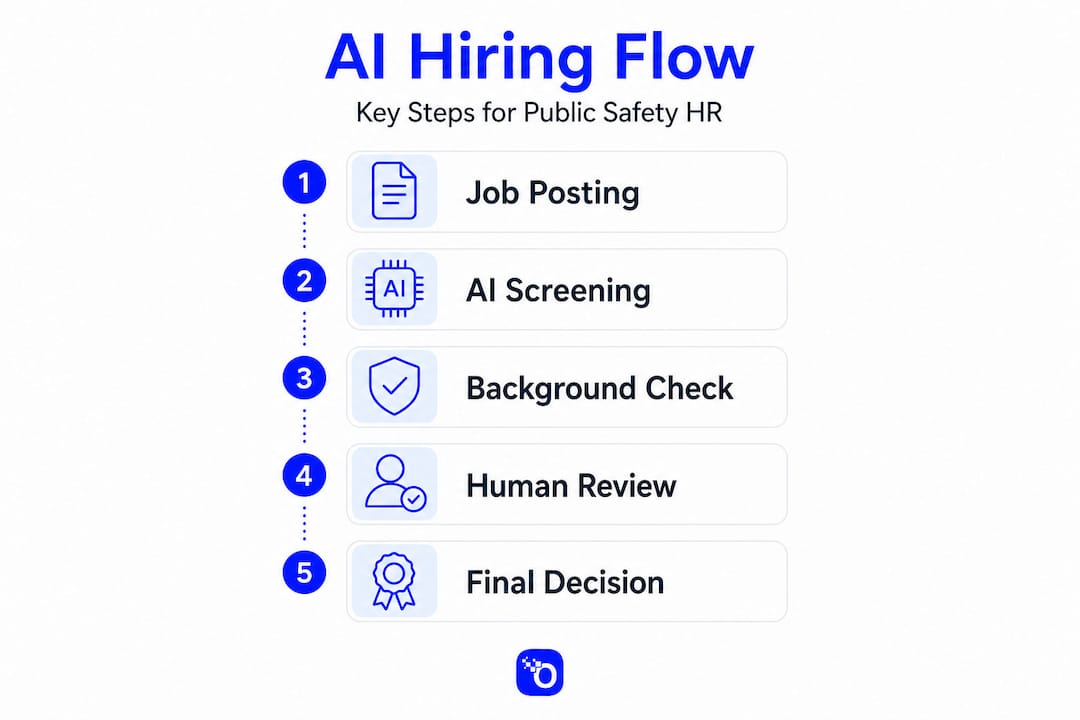

Public safety agencies are under mounting pressure to recruit faster, screen more rigorously, and reduce the risk of placing the wrong person in a high-stakes role. AI in recruitment is reported to cut hiring time by 85% for public safety agencies. The figure sounds almost too good to be true, and the technology behind it brings equally significant responsibilities. This guide cuts through the noise to give HR professionals in law enforcement, fire and EMS, dispatch, and government agencies a clear, practical map for adopting AI-driven tools without compromising integrity, compliance, or community trust.

Table of Contents

- The evolution of AI in public safety hiring

- Ensuring fairness, compliance, and auditability

- Risk-based procurement and implementation

- Addressing edge cases: Identity fraud and anti-fraud strategies

- Building sustainable, human-centered AI processes

- What most guides miss: Hard-won lessons for AI in public safety HR

- Enhance your screening: Proven solutions for public safety hiring

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI boosts efficiency | AI can drastically speed up public safety hiring by automating screenings and interviews. |
| Compliance is non-negotiable | Agencies must continuously monitor AI tools for fairness and legal compliance, especially regarding protected classes. |
| Human oversight matters | AI should complement, never replace, human judgment, empathy, and ethical review in high-stakes hiring. |
| Fraud risks persist | Even advanced AI checks must include robust anti-fraud and identity verification steps to prevent false credentials. |
| Ongoing monitoring needed | Annual system review and validation are essential for maintaining safe and equitable AI hiring processes. |

The evolution of AI in public safety hiring

Artificial intelligence is no longer a distant concept reserved for Silicon Valley talent teams. It is a silent force reshaping public safety recruitment in real time. AI speeds up hiring by automating tasks that once consumed weeks of HR staff time, from parsing thousands of applications to scheduling interviews and flagging incomplete submissions.

What makes this shift especially consequential for public safety is the volume and complexity of the hiring process. A single law enforcement agency may receive hundreds of applications for a small number of positions, each requiring extensive pre-employment vetting. AI handles volume effortlessly. It also brings standardization, applying the same evaluation criteria to every candidate without the inconsistencies that naturally arise when multiple reviewers assess applications by hand.

AI interviewing in government contexts demonstrates that these tools can standardize and accelerate initial screening at scale, a capability particularly valuable for agencies with limited HR staff and high applicant volumes. That standardization matters beyond efficiency. It reduces the likelihood that a qualified candidate is missed simply because a reviewer was fatigued or operating under implicit assumptions.

Key benefits agencies are experiencing include:

- Faster time-to-hire: Automated resume parsing and initial screening reduce the days between posting and first interview.

- Consistent scoring: Structured AI evaluation applies identical criteria to every candidate, creating a defensible, uniform record.

- Scalable processing: Agencies running a single recruitment cycle with 500 applications gain as much value from AI as those running continuous hiring.

- Early flag detection: Pattern recognition tools can identify anomalies in application data, such as unexplained employment gaps or credential inconsistencies, before a background investigator spends time on a file.

However, tech-driven recruitment also introduces challenges that demand deliberate management. Process integrity, human oversight, and legal compliance cannot be afterthoughts when the end result is placing an officer in a community or a dispatcher in an emergency communication center. The technology is transformative. The responsibility is equally large.

Ensuring fairness, compliance, and auditability

Compliance in AI-assisted hiring is not optional, and it is not simple. When AI affects protected classes in employment decisions, regulators expect employers to understand how their AI tools behave and to continuously evaluate for disparate impact risk. This expectation is not a vague aspiration. It is an enforceable standard under Title VII of the Civil Rights Act, and public safety agencies are not exempt.

Disparate impact occurs when a facially neutral policy or practice produces significantly different outcomes for members of a protected class than for other applicants. An AI tool that favors certain writing styles, educational institutions, or credential formats may inadvertently screen out qualified candidates from underrepresented communities. The agency may never intend that result, but intent is not the legal standard. Outcome is.

The Americans with Disabilities Act adds another layer. AI and the ADA intersect in ways many HR teams do not anticipate. If an AI screening tool evaluates candidates using data that reflects disability-related information, whether that is a gap in employment history corresponding to medical treatment or a nonstandard application format due to an accommodation, the agency must still ensure that individualized assessments and reasonable accommodations are preserved. Automation does not waive ADA obligations.

The following table compares key regulatory requirements that apply to AI-assisted hiring tools in public safety contexts:

| Regulatory framework | Primary concern | Agency obligation |
|---|---|---|
| Title VII (EEOC) | Disparate impact on protected classes | Monitor AI outputs, audit for bias patterns |
| Americans with Disabilities Act | Screening out disabled candidates | Ensure accommodations, individualized review |
| FCRA | Consumer report accuracy and consent | Use FCRA-compliant vendors, obtain written consent |
| State-level AI hiring laws | Disclosure and opt-out rights | Review jurisdiction-specific requirements |

To stay ahead of these obligations, HR professionals should take the following steps before deploying any AI background check tool in their pipeline:

- Document every tool’s decision logic. Obtain vendor documentation that explains what data inputs the system uses and how it weights them to reach an outcome.

- Run a disparate impact analysis at regular intervals. Compare pass rates across gender, race, age, and disability status at every screening stage where AI is used.

- Establish a human review threshold. Determine which decisions require mandatory human review before any candidate advances or is eliminated.

- Create an accommodation request pathway. Ensure candidates who need alternative testing or interview formats can request them before AI evaluation begins.

- Maintain audit trails. Document all AI-assisted decisions, including the criteria applied, the outputs generated, and the human actions taken in response.

Pro Tip: The widely known “4/5ths rule,” which treats a group’s selection rate below 80% of the highest group’s rate as evidence of adverse impact, is a starting point for compliance, not a finish line. A pass rate that clears the 4/5ths threshold may still mask statistically significant disparities within subgroups, so public safety agencies need to go beyond that initial inference. Combine the rule with regression analysis and qualitative review of your screening criteria to build a defensible, legally sound position.
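As a rough illustration of the first-pass check described above, the sketch below computes each group’s selection rate at one screening stage and flags any group whose rate falls below four-fifths of the highest group’s rate. The group labels, counts, and function names are hypothetical, and the check is only the initial screen, not the regression analysis or qualitative review that should follow it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupOutcome:
    """Applicants and passes for one group at one screening stage."""
    group: str
    applicants: int
    passed: int

    @property
    def selection_rate(self) -> float:
        return self.passed / self.applicants if self.applicants else 0.0


def impact_ratios(outcomes: list[GroupOutcome]) -> dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate."""
    top_rate = max(o.selection_rate for o in outcomes)
    if top_rate == 0:
        return {o.group: 0.0 for o in outcomes}
    return {o.group: o.selection_rate / top_rate for o in outcomes}


def flag_four_fifths(outcomes: list[GroupOutcome], threshold: float = 0.8) -> list[str]:
    """List groups whose impact ratio falls below the threshold (default 4/5)."""
    return [g for g, r in impact_ratios(outcomes).items() if r < threshold]


# Hypothetical counts for illustration only.
stage = [
    GroupOutcome("group_a", applicants=200, passed=100),  # selection rate 0.50
    GroupOutcome("group_b", applicants=150, passed=54),   # selection rate 0.36
    GroupOutcome("group_c", applicants=100, passed=45),   # selection rate 0.45
]
ratios = impact_ratios(stage)
flagged = flag_four_fifths(stage)
print(ratios, flagged)
```

Running the same check at every stage where AI is used, rather than only on final hiring outcomes, makes it easier to see which step introduces a disparity.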

Review public safety compliance frameworks regularly, especially as EEOC guidance evolves. Agencies that conduct annual compliance reviews are generally better positioned during audits and litigation than those that review only when a complaint arises.

The ADA screening integration challenge is one that many agencies discover only after deploying a tool. Building accommodation pathways into the platform architecture from the start is far less disruptive than retrofitting them after a complaint.

Risk-based procurement and implementation

Not every AI tool carries the same risk profile. A tool that schedules interviews carries lower risk than one that ranks candidates based on psychological indicators or predicts future job performance. Public safety agencies must classify AI systems by their potential impact before procurement, not after.

A risk-based procurement framework for criminal justice contexts identifies a clear sequence: define the problem and assess readiness, classify the system’s risk level and opportunity, secure procurement protections, implement with appropriate safeguards, and monitor continuously with reassessment at least once per year. This framework is equally applicable to HR functions within public safety agencies.
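One way to make the classification step concrete is a small triage function that an agency could run over its tool inventory before procurement. The three attributes and the tier rules below are illustrative assumptions based on the contrast drawn above between scheduling tools and candidate-ranking tools. They are not taken from any published framework or legal standard.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AITool:
    """Hypothetical inventory entry for one AI system under consideration."""
    name: str
    affects_candidate_outcome: bool     # can it advance or eliminate a candidate?
    infers_personal_traits: bool        # e.g. psychological indicators, performance prediction
    human_reviews_every_decision: bool  # is a human sign-off mandatory?


def classify(tool: AITool) -> RiskTier:
    """Assign an illustrative tier; the rules are assumptions, not a legal test."""
    if tool.infers_personal_traits:
        return RiskTier.HIGH
    if tool.affects_candidate_outcome:
        return RiskTier.MEDIUM if tool.human_reviews_every_decision else RiskTier.HIGH
    return RiskTier.LOW


scheduler = AITool("interview_scheduler", False, False, False)
ranker = AITool("psych_indicator_ranker", True, True, True)
resume_screen = AITool("resume_screen", True, False, True)

for tool in (scheduler, ranker, resume_screen):
    print(tool.name, classify(tool).value)
```

The tier would then drive how much procurement protection, safeguarding, and monitoring the tool receives in the later phases.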

The following table outlines key implementation phases and the core questions HR should answer at each stage:

| Implementation phase | Key questions to answer |
|---|---|
| Readiness assessment | Is our data infrastructure ready? Do staff understand how to interpret AI outputs? |
| Vendor procurement | Does the vendor provide bias audit results? Is the contract FCRA-compliant? |
| Pilot deployment | Are we testing on a representative applicant pool? Who reviews edge cases? |
| Ongoing monitoring | Are we auditing disparate impact quarterly? What triggers a full system review? |

Before selecting a vendor, HR professionals should also examine data privacy in hiring to understand how applicant data is stored, shared, and deleted. Data minimization, meaning collecting only what is necessary for the specific screening decision, is both an ethical standard and a legal safeguard in most jurisdictions.

Digital forensics offers a useful benchmark for understanding what AI-assisted investigation can genuinely achieve. Evidence discovery rates in digital forensic contexts have reached approximately 85% with modern tools, a figure that illustrates both the power and the limitation of automated investigation. Even at 85%, roughly 15% of the evidence, or about one item in seven, is missed by the tools and requires human follow-up. That gap is where investigator-driven checks remain irreplaceable.

Key questions every agency should resolve before signing a vendor contract:

- Does the vendor have third-party validation of their bias auditing methodology?

- What happens to candidate data after a hiring decision is made?

- Is the system capable of generating an audit log for every screening decision?

- Does the contract specify performance benchmarks and remediation terms if those benchmarks are not met?

- What is the vendor’s policy on algorithmic updates, and how does the agency get notified?

Review the compliance guide for public safety agencies to ensure your procurement process maps to both federal requirements and the specific regulatory environment of your jurisdiction. Procurement shortcuts in AI adoption often cost far more in litigation, remediation, and reputational damage than the time they save upfront.

Addressing edge cases: Identity fraud and anti-fraud strategies

One of the most underestimated vulnerabilities in public safety hiring is identity fraud. Identity fraud and credential fabrication can undermine both traditional and AI-assisted background checks, and this is not a rare occurrence. Sophisticated applicants have successfully presented fabricated law enforcement certifications, falsified prior employment records, and manipulated digital identity documents in ways that pass automated verification.

The risk is acute in public safety because the consequences of a fraudulent hire extend far beyond a bad employee. A fabricated police officer certification or a falsified paramedic license puts community members at direct physical risk. The agency faces civil liability, regulatory penalties, and lasting reputational harm.

“Background checks confirm what is in the record. They do not independently verify that the person presenting the records is who they claim to be. That gap is where identity fraud lives.”

AI-assisted background checks can process large volumes of records quickly and flag inconsistencies across data sources. But they rely on the accuracy of the underlying data. If an applicant submits a fraudulent Social Security Number tied to a real but deceased individual, or presents a credential that was never actually issued, the AI tool will often accept what the data source returns.

Document authentication for fraud prevention has become a critical layer in the verification process. Modern document authentication tools examine metadata, font consistency, digital watermarks, and document structure to detect fabrication. These methods go far beyond checking whether a number appears in a database.

Effective anti-fraud strategies for public safety hiring include:

- Multi-source identity verification: Cross-reference Social Security Numbers against credit bureau records, government identity databases, and prior employer records simultaneously.

- Biometric verification at key stages: Implement fingerprint or facial recognition confirmation at initial application and again at the conditional offer stage to confirm consistent identity throughout the process.

- Credential primary-source verification: Contact the issuing institution directly for every license or certification, rather than accepting a copy provided by the applicant.

- Digital document authentication: Apply forensic-grade document analysis to all submitted credentials before they enter the background investigation file.

- Layered investigator review: Assign a human investigator to review all digital verification results on high-risk roles, specifically command positions, undercover assignments, and roles with access to sensitive systems.

Pro Tip: Do not treat a passed background check as proof of verified identity. Use the compliance checklist to build identity verification as a parallel, independent process that runs alongside your background investigation rather than after it. When both processes agree, confidence is high. When they diverge, that divergence is itself a meaningful signal worth investigating.
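The agree-or-diverge logic in this tip can be sketched as a small reconciliation step. The result values and next-step labels are hypothetical names chosen for illustration. A real workflow would carry far more detail than a pass or fail from each process.

```python
from enum import Enum


class CheckResult(Enum):
    PASS = "pass"
    FAIL = "fail"


def reconcile(background: CheckResult, identity: CheckResult) -> str:
    """Combine two independently run checks into a next step for the investigator."""
    if background is CheckResult.PASS and identity is CheckResult.PASS:
        return "proceed"  # both processes agree and both are clean
    if background is not identity:
        return "investigate_divergence"  # disagreement is itself a signal
    return "do_not_advance_without_review"  # both processes failed


print(reconcile(CheckResult.PASS, CheckResult.FAIL))
```

Keeping the two inputs separate, instead of letting a passed background check stand in for identity, is what allows a divergence to surface at all.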

The most dangerous fraud attempts are not the crude ones. They are the sophisticated, well-constructed submissions that pass automated checks and raise no automated flags. Human investigators with experience in document forensics and identity verification remain the most reliable defense at the edge of what AI tools can detect.

Building sustainable, human-centered AI processes

Efficiency gains from AI are real. They are also insufficient as a standalone justification for deploying automated tools in public safety hiring. AI can improve efficiency and standardize evaluation, but it must not replace human judgment where empathy, fairness, and oversight are central to the decision.

That framing is not a limitation on technology. It is a statement about what public safety hiring is actually for. The goal is not to process applications faster. The goal is to identify individuals with the integrity, judgment, and character to serve in roles where their decisions affect human lives. Speed is a means to that end, not the end itself.

Building a sustainable human-AI hiring process requires deliberate design at each stage. The following steps represent best practices drawn from agencies that have implemented AI tools without sacrificing investigative rigor:

- Define human decision points before deployment. Map your hiring workflow and designate every stage at which a human must review, approve, or override an AI recommendation before the process advances.

- Train HR staff on AI output interpretation. An AI scoring report is not the same as a background investigator’s narrative. HR staff need specific training to interpret what AI outputs mean, what they do not mean, and when to escalate.

- Preserve empathy in candidate-facing interactions. Use AI for back-end processing but maintain human-led conversations with candidates, particularly when discussing sensitive background matters such as prior incidents, gaps in employment, or psychological history.

- Document every override and its rationale. When a human overrides an AI recommendation, record the reason. This documentation protects the agency and builds a dataset for improving the system over time.

- Conduct annual process reviews. Technology changes. Regulatory guidance evolves. Candidate populations shift. Annual reviews ensure that your human-AI balance remains calibrated to current conditions.
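The documentation step in particular lends itself to a simple structured record. The sketch below shows one possible shape for an audit entry that refuses to store an override without a written rationale. The field names, the identifiers in the example, and the JSON-lines format are assumptions made for illustration, not features of any particular platform.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScreeningDecision:
    """One AI-assisted decision and the human action taken on it (hypothetical schema)."""
    candidate_id: str
    stage: str
    ai_recommendation: str
    human_decision: str
    reviewer_id: str
    rationale: str = ""
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        # An override without a stated reason is rejected at creation time.
        if self.is_override and not self.rationale.strip():
            raise ValueError("An override must include a written rationale.")

    @property
    def is_override(self) -> bool:
        return self.human_decision != self.ai_recommendation


def to_log_line(decision: ScreeningDecision) -> str:
    """Serialize the decision as one JSON line for an append-only audit log."""
    record = asdict(decision)
    record["is_override"] = decision.is_override
    return json.dumps(record, sort_keys=True)


# Hypothetical example: a reviewer advances a candidate the tool recommended rejecting.
example = ScreeningDecision(
    candidate_id="C-001",
    stage="resume_screen",
    ai_recommendation="reject",
    human_decision="advance",
    reviewer_id="R-17",
    rationale="Employment gap explained by documented military service.",
)
print(to_log_line(example))
```

Enforcing the rationale when the record is created, rather than at review time, means the evidentiary record is complete by construction.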

The compliance essentials framework reinforces that documentation is not bureaucratic overhead. It is the evidentiary record that defends your agency in the event of a hiring-related complaint, litigation, or audit.

Pro Tip: Resist the temptation to expand AI’s role incrementally without governance checkpoints. Every time AI is given authority over an additional decision point in the pipeline, that expansion should trigger a new bias audit, a new documentation protocol, and a fresh review of the human oversight structure. Unchecked expansion is how agencies end up with AI systems making consequential decisions that no one fully understands or can explain.

What most guides miss: Hard-won lessons for AI in public safety HR

Most discussions of AI in public safety hiring focus on what the technology can do. Fewer address what experienced practitioners have learned from actually deploying it. The gap between the two is where agencies get into trouble.

Speed is the headline benefit of AI recruitment tools, and it deserves its prominence. Reducing time-to-hire by 85% for public safety agencies means getting qualified candidates into service faster, reducing the strain on existing staff, and lowering the cost of prolonged vacancies. These are meaningful outcomes. But speed without auditability is a liability.

Agencies that rush deployment to capture efficiency gains frequently find themselves unable to reconstruct their decision rationale when a rejected candidate files a complaint. An AI system that cannot produce an explainable, auditable output for every screening decision is a legal exposure waiting to materialize. Explainability is not a feature. It is a requirement.

The second overlooked lesson is that human oversight is not a fallback for when AI fails. It is a structural component of a properly designed system. AI is a tool for handling volume, applying consistency, and flagging anomalies. Human investigators bring contextual judgment, ethical discernment, and the ability to recognize patterns that AI systems are not trained to detect. These are not redundant functions. They are complementary.

The third lesson is counterintuitive for agencies focused on modernization. The agencies that implement AI most successfully are often those that invest the most in their human investigative capacity at the same time. They use AI to handle what AI does well, and they free up experienced investigators to apply deeper scrutiny to the cases that most require it. That is not a compromise. That is intelligent resource allocation.

Finally, the most ethical position an agency can take regarding AI in hiring is honesty about what the technology cannot do. It cannot verify character. It cannot measure judgment under pressure. It cannot assess whether a person will act with integrity when no one is watching. Those determinations require human interaction, psychological evaluation, and the kind of investigative depth that no algorithm yet produces.

Enhance your screening: Proven solutions for public safety hiring

For HR teams that have navigated the complexity of AI adoption and are ready to put these principles into practice, the next step is partnering with a platform built specifically for public safety demands.

OMNI Intel’s pre-employment screening services are designed from the ground up for law enforcement, fire and EMS, dispatch, and government agencies. Rather than adapting general commercial tools to a public safety context, OMNI Intel brings investigator-driven methodology to every stage of the background investigation process. The platform’s background checks are FCRA-compliant, continuously updated to reflect regulatory changes, and built to produce the kind of auditable, explainable outputs that agencies need when accountability matters most. Explore the investigation principles that guide every OMNI Intel review and see how they apply directly to your agency’s hiring challenges.

Frequently asked questions

How does AI improve speed in public safety hiring?

AI is reported to cut hiring time by 85% by automating initial screening, interview scheduling, and application parsing, allowing HR teams to reach qualified candidates significantly faster than manual processes permit.

What safeguards are needed to ensure AI doesn’t introduce bias?

Agencies must monitor AI outputs for disparate impact across protected classes, apply structured evaluation criteria, and follow EEOC Title VII guidance rigorously. Satisfying the 4/5ths rule is only the starting point for a defensible compliance position, not the conclusion.

How should AI tools handle disability-related candidate information?

Under ADA requirements, AI screening tools must not eliminate candidates based on disability-related data without individualized assessment, and agencies must maintain accessible accommodation pathways throughout the automated process.

What is a risk-based procurement approach for AI in public safety?

It is a structured sequence that begins with a readiness assessment, classifies the AI system’s risk level, applies appropriate procurement protections, implements with safeguards, and reassesses outcomes at least once per year to protect both agency integrity and community safety.

Can AI eliminate identity fraud in background screening?

No. Identity fraud and credential fabrication remain persistent vulnerabilities even in AI-assisted processes, requiring layered verification strategies including primary-source credential checks and digital document authentication alongside automated screening.